Context Engineering: Going Beyond Prompts To Push AI

Prompts were a parlor trick.

“Act as a world-class XYZ…”

Everyone had one. Everyone felt clever.

But here’s the truth:

Prompting was a party trick for small context windows.

Now? We’ve got million-token windows.

And suddenly the game isn’t “what do I ask?”

It’s: what does the system know when it starts thinking?

Welcome to context engineering—

the real infrastructure behind intelligent work.

The Shift That Changes Everything

Prompt engineering = front-end flair.

Context engineering = backend architecture.

Prompts talk to the model.

Context feeds it judgment.

This is the difference between:

- Playing with LLMs

- And building systems that think

Want better results?

Stop writing better prompts.

Start engineering the worldview the model operates in.

Context Engineering Is What Serious Builders Are Doing

Let’s be precise.

You don’t need 10 clever personas.

You need:

- The right docs.

- The right history.

- The right system messages.

- The right tools.

- In the right order.

- Delivered at runtime.

- Inside the token limits.

This is stack design, not sentence polishing.
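
Here is a minimal sketch of what that stack design might look like in code. Everything in it is an assumption made for illustration: the Piece structure, the priority numbers, and the word-based token estimate are hypothetical, and a real system would use the model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Piece:
    """One candidate chunk of context (hypothetical structure)."""
    kind: str      # e.g. "system", "doc", "history", "tool"
    text: str
    priority: int  # lower number = more important, kept first


def estimate_tokens(text: str) -> int:
    # Rough heuristic only: about 4 tokens per 3 words, rounded up.
    # A real system would count with the model's own tokenizer.
    words = len(text.split())
    return (words * 4 + 2) // 3


def assemble_context(pieces: list[Piece], budget: int) -> list[Piece]:
    """Keep the highest-priority pieces that fit the token budget,
    then return them in their original order."""
    ranked = sorted(enumerate(pieces), key=lambda pair: pair[1].priority)
    used = 0
    kept_indexes = []
    for index, piece in ranked:
        cost = estimate_tokens(piece.text)
        if used + cost <= budget:
            kept_indexes.append(index)
            used += cost
    return [pieces[i] for i in sorted(kept_indexes)]
```

The design choice worth noticing: selection happens by priority, but delivery happens in the original order. What gets in and where it sits are two separate decisions.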

Context Engineers Don’t Type. They Architect.

Their job isn’t to “sound smart.”

It’s to make intelligence possible.

They:

- Curate what the model sees

- Layer tools and retrieved data

- Compress without losing the thread

- Measure for hallucinations and lost signal as the context grows

And they understand this:

You don’t scale intelligence by typing better.

You scale it by structuring context so well, the system starts teaching itself.

Context Windows: The Real Limit You Need to Understand

Context windows have a hard cap—measured in tokens.

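A token is roughly ¾ of an English word. Unfamiliar words get split into pieces, and the exact split depends on the tokenizer: “ChatGPT” might come out as “Chat” and “GPT,” or as three smaller chunks.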

This matters because everything scales with token count:

- Latency

- Cost

- Performance

- Memory

And here’s the constraint most people forget:

LLMs only know what they were trained on—and what fits in the context window.

Which means everything rides on what you feed them.
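
The arithmetic is simple enough to sketch. This is a back-of-the-envelope estimate built on the ¾ rule of thumb, not a real tokenizer, and the function names and the price parameter are made up for illustration.

```python
def words_to_tokens(word_count: int) -> int:
    # Rule of thumb: one token is roughly 3/4 of a word,
    # so tokens is roughly words divided by 0.75.
    # Real counts vary by tokenizer and language.
    return round(word_count / 0.75)


def fits_in_window(word_count: int, window_tokens: int,
                   reserved_for_output: int = 0) -> bool:
    # Leave room for the model's reply, which shares the same window.
    return words_to_tokens(word_count) + reserved_for_output <= window_tokens


def input_cost(word_count: int, price_per_million_tokens: float) -> float:
    # price_per_million_tokens is a hypothetical parameter;
    # check your provider's actual pricing.
    return words_to_tokens(word_count) * price_per_million_tokens / 1_000_000
```

By this estimate, a 750-word memo is about 1,000 tokens, and a 900,000-word archive would not fit in a million-token window.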

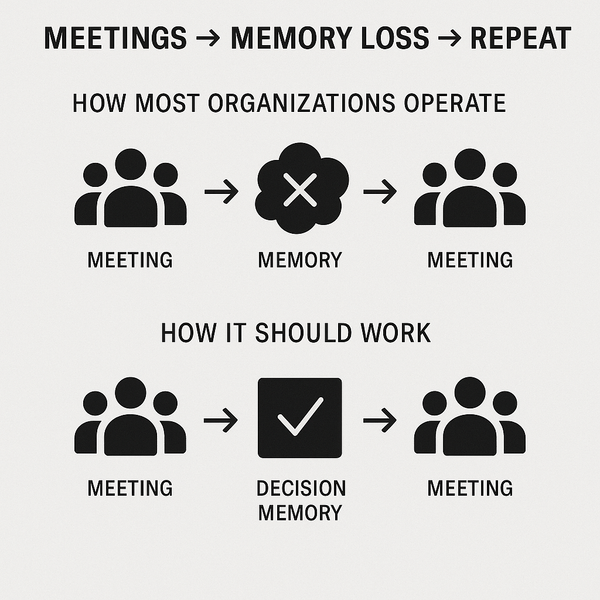

How AI “Remembers” Your Conversation

Ever noticed how ChatGPT and Claude “remember” the thread?

They don’t actually remember.

They just keep sliding your past questions and their answers back into the context window—like the movie Memento, where the guy tattoos clues on his body to function.

No long-term memory. Just the transcript, replayed every turn.

Useful? Yes. Scalable? Not quite: every replayed turn costs tokens, and old turns eventually fall out of the window.
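
A toy version of that replay loop, assuming a stateless model. The fake_model function is a stand-in rather than any real API, and trimming by message count is a simplification. A production system would more likely trim by token count.

```python
def build_request(history: list[dict], new_user_message: str,
                  max_messages: int) -> list[dict]:
    """Return the full message list a stateless model is sent this turn."""
    messages = history + [{"role": "user", "content": new_user_message}]
    # Keep only the most recent messages once the thread grows too long.
    return messages[-max_messages:]


def fake_model(messages: list[dict]) -> str:
    # Stand-in for a real model call: it can only "see" what is in messages.
    return f"I can see {len(messages)} message(s)."


history: list[dict] = []
for question in ["Hi", "What did I just say?", "And before that?"]:
    request = build_request(history, question, max_messages=4)
    answer = fake_model(request)
    # The app, not the model, stores the turn so it can be replayed next time.
    history = request + [{"role": "assistant", "content": answer}]
```

By the third question, the original “Hi” has already been trimmed away. The model never forgot it. It was simply never shown it again.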

RAG: Teaching AI on Demand

Retrieval-Augmented Generation (RAG) changed the game.

You retrieve relevant docs, insert them into the context window, and suddenly the model can “learn” on demand—no retraining needed.

This is how modern AI systems get up to speed:

Not by being smarter—by seeing more.

But even this depends on what and how you retrieve. Garbage context still gets you garbage output.
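
To make the retrieve-then-insert step concrete, here is a deliberately tiny sketch. It scores documents by shared words, which is a swapped-in toy technique. Real RAG systems typically use embeddings and a vector index. The function names and the prompt wording are assumptions for illustration.

```python
import re


def tokenize(text: str) -> set[str]:
    # Lowercase and split into simple alphanumeric words.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[str], top_k: int = 2) -> list[str]:
    """Rank docs by how many query words they share (toy scoring)."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in docs]
    # Drop documents with no overlap at all, then sort best first.
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in relevant[:top_k]]


def build_prompt(query: str, docs: list[str]) -> str:
    # Only the retrieved docs reach the context window.
    context = "\n".join(f"- {doc}" for doc in retrieve(query, docs))
    return f"Use only this context:\n{context}\n\nQuestion: {query}"
```

The model only ever sees what retrieve returns. If the scoring is weak, the wrong docs go in, and the answer inherits the problem.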

Tool Calling: Extending Capability Without Retraining

Tool calling extends what a model appears able to do by offering it external functions:

- Search

- Weather

- Code execution

- Financial lookups

But here's the catch:

LLMs can’t actually call the tools.

They just output a structured request (which tool, with what input), and your app executes it on their behalf.

The request and the tool’s result then get added back into the context window like a note passed in class. The LLM sees the result, reprocesses, and continues.

It’s clever.

But it’s still context juggling—not memory. Not autonomy.
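
The loop can be sketched in a few lines. Everything here is hypothetical: fake_model stands in for an LLM, get_weather returns canned data, and the JSON request shape is an assumption, not any vendor's actual format.

```python
import json


def get_weather(city: str) -> str:
    # Hypothetical tool with canned data; a real one would call an API.
    return {"Paris": "18C and cloudy"}.get(city, "unknown")


TOOLS = {"get_weather": get_weather}


def fake_model(messages: list[dict]) -> str:
    """Stand-in for an LLM. It only emits text: either a JSON tool
    request or a final answer, depending on what is in the context."""
    last = messages[-1]
    if last["role"] == "tool":
        return f"The weather is {last['content']}."
    return json.dumps({"tool": "get_weather", "args": {"city": "Paris"}})


def run(user_message: str) -> list[dict]:
    messages = [{"role": "user", "content": user_message}]
    for _ in range(5):  # cap the loop so a confused model cannot spin forever
        output = fake_model(messages)
        messages.append({"role": "assistant", "content": output})
        try:
            request = json.loads(output)
        except json.JSONDecodeError:
            request = None
        if not isinstance(request, dict) or "tool" not in request:
            break  # plain text means the model is done
        # The app, not the model, executes the tool.
        result = TOOLS[request["tool"]](**request["args"])
        # The result goes back into the context for the next pass.
        messages.append({"role": "tool", "content": result})
    return messages
```

Calling run with a weather question yields four messages: the user's question, the model's request, the tool's result, and the final answer. The model never touched the tool. It only read the note.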

Why This All Matters Now

With million-token context windows live, you can now feed an AI:

- An entire product backlog

- A full compliance manual

- Months of chat transcripts

- Your whole codebase

And it can reason across all of it—in one go.

But it doesn’t do that automatically.

You still have to decide what goes in, what stays out, and how it’s structured.

That’s not prompting.

That’s context engineering.

And it’s becoming the only thing that separates “pretty good” from “this changes everything.”

Why This Is the New Moat

The models are converging.

Everyone will have GPT-5, Claude, Gemini, and whatever xAI drops next.

You won’t win on access.

You’ll win on how you feed them.

If you control:

- The architecture of memory

- The design of context

- The surface area of tools

- And the cadence of evaluation...

Then you control the outcome.

One Last Thought

Prompt engineering made you clever.

Context engineering will make you dangerous.

This isn’t about telling AI what to do.

It’s about building the system that ensures it does the right thing—at scale, without stalling, and under pressure.

The prompt engineering era taught us to talk to AI. The context engineering era is teaching us to think with AI.

The prompt era is over.

This is infrastructure now.

Design accordingly.

More soon,

Gage Batten

Under Construction

How work is being rebuilt in real time