The Great AI Overfit: Why General Tools Are Losing to Specific Context

There’s a pattern playing out.

You try a general-purpose AI tool.

It answers questions, writes decent copy, summarizes a meeting.

It’s helpful but shallow.

You spend more time rewriting than delegating.

You still need to explain things it should’ve understood.

Why?

Because it wasn’t trained for your context.

And in real work, context is everything.

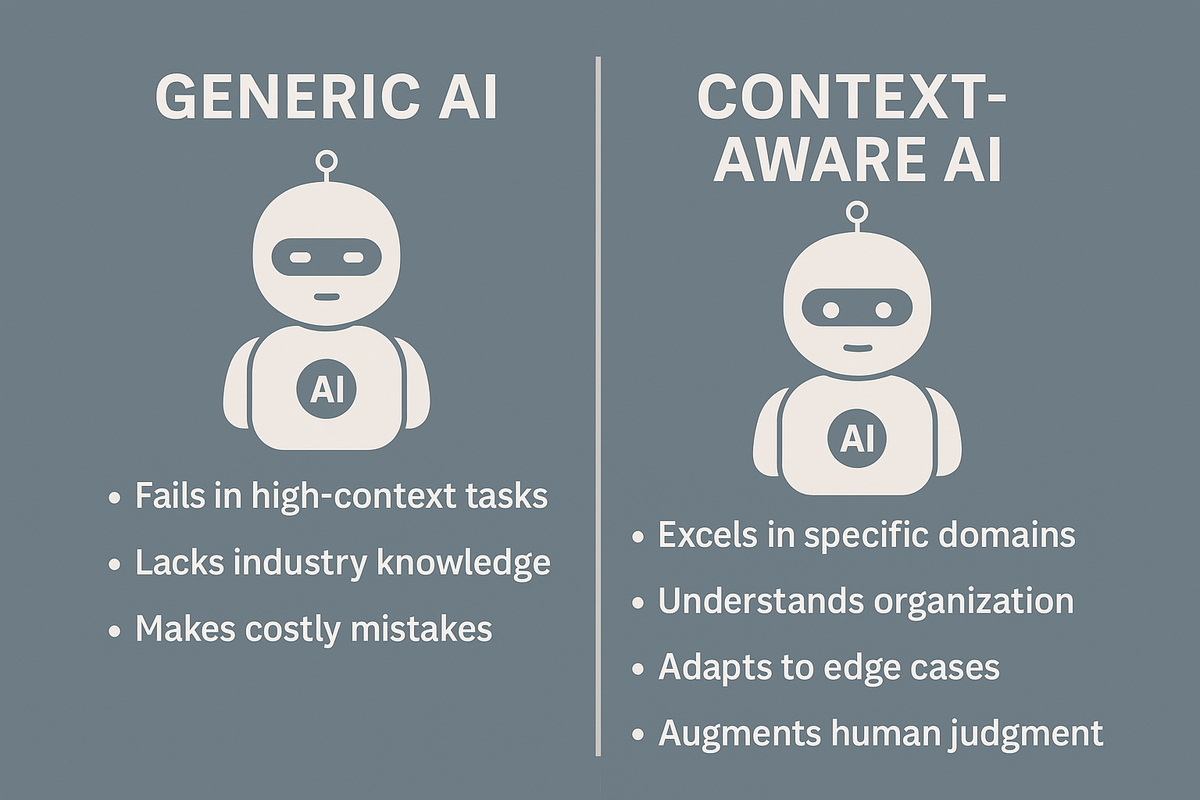

General-purpose AI is overfitting to everything and underperforming everywhere

When a model is trained on the entire internet, it doesn’t know what not to say.

It has seen too much.

It assumes too little.

So it hedges.

It rambles.

It gives you answers that sound good—but don’t move the ball forward.

You get a confident summary with the wrong emphasis.

A task list that ignores your org’s actual constraints.

A response that fits a template but misses the nuance.

That’s overfit.

It’s what happens when the model knows a lot but understands nothing about you.

Real leverage is coming from narrow, high-context tools

The AI that rewrites your punch list correctly?

It was trained on your past projects, your subs, your sequencing logic.

The AI that actually helps with contract review?

It knows your risk tolerances, your negotiation posture, and your client base.

The AI that can route RFIs?

It’s seen how your team tags disciplines, phrases scopes, and loops in engineering.

The tools that win aren’t smarter in general.

They’re smarter about you.

The false promise of the generalist AI

When AI hit the mainstream, the value prop was:

“Talk to it like a person. It can help with anything.”

That’s true, and that’s the problem.

Because anything = nothing in most operational environments.

The tool doesn’t know what matters.

It can’t infer your priorities, your sequencing logic, your risk posture, or your workflows.

So it generates.

But it doesn’t decide.

And worse: it adds friction to workflows that were already misaligned.

This is where vertical AI wins

The next wave of value won’t come from another chat assistant.

It’ll come from tools built around:

- Specific problems

- Specific data models

- Specific team structures

- Specific language

- Specific process loops

AI becomes useful when it speaks your company’s dialect—not just English.

This is why companies are starting to:

- Train internal models on their knowledge base

- Fine-tune for field documentation

- Use retrieval systems over chat interfaces

- Prioritize accuracy over generality

Because they’ve realized:

The cost of being “kind of helpful in general” is operational drag in practice.
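To make "retrieval systems over chat interfaces" concrete, here is a minimal sketch of the idea: look up the most relevant internal note first, then hand only that context to the model. Everything named here is an assumption for illustration (the `DOCS` entries, the `retrieve` and `build_prompt` helpers, the stopword list), and simple keyword overlap stands in for the embedding search a real system would more likely use.

```python
import re
from collections import Counter

# Hypothetical internal notes; in practice these would come from your own knowledge base.
DOCS = {
    "rfi-routing": "Structural RFIs go to the engineer of record; tag discipline as STR.",
    "punch-list": "Punch list items are sequenced by trade: drywall before paint before flooring.",
    "contracts": "Flag any indemnity clause without a liability cap for legal review.",
}

# Small illustrative stopword list so common words do not count as matches.
STOPWORDS = {"the", "a", "to", "of", "for", "is", "are", "as", "by", "any", "what", "who", "how"}


def tokenize(text):
    """Lowercase the text and count its non-stopword words."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def retrieve(question, docs, k=1):
    """Return up to k doc ids ranked by word overlap with the question."""
    q = tokenize(question)
    scored = []
    for doc_id, text in docs.items():
        overlap = sum((q & tokenize(text)).values())
        scored.append((overlap, doc_id))
    # Highest overlap first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for score, doc_id in scored[:k] if score > 0]


def build_prompt(question, docs, k=1):
    """Assemble a prompt that restricts the model to the retrieved context."""
    ids = retrieve(question, docs, k)
    context = "\n".join(f"[{i}] {docs[i]}" for i in ids)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    print(build_prompt("Who gets structural RFIs?", DOCS))
```

The design choice worth noticing is that the question never reaches the model alone. It arrives with your team's own wording attached, and when nothing in the knowledge base matches, the context comes back empty rather than filled with a generic guess.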

What this means for teams building with AI

If you’re evaluating tools right now, ask:

- Does this AI understand our business logic?

- Does it preserve domain-specific language and reasoning?

- Can it be constrained to just our workflows, or is it hallucinating based on Reddit?

- Do we want magic tricks or durable productivity?

And if you're building?

Stop trying to make your AI “do everything.”

Start making it understand one thing extremely well.

The future isn't general intelligence. It's embedded intelligence.

The tools that will win over the next 2–3 years won’t be the ones that talk best.

They’ll be the ones that fit best.

Fit the workflow.

Fit the domain.

Fit the way the team actually works.

AI that adapts to you will beat AI that tries to impress everyone.

If you’re seeing this play out in your company, or building a tool that lives on one side of this line, let me know.

This is where the gap between demo and deployment gets real.

More soon,

Gage Batten

Under Construction

How work is being rebuilt in real time