The Metrics We Track Are Still Pre-AI

We’re tracking the wrong things.

Not because the old metrics were wrong—but because they were built for an environment that doesn’t exist anymore.

Most KPIs in use today were designed for human bandwidth, manual work, and slow feedback loops. That’s the frame.

But once AI enters the system, whether on the input side, the decision side, or the delivery side, those measurements start breaking down.

Pre-AI metrics reflect pre-AI constraints

Let’s take a few simple examples:

- Time-to-complete

- Cost-per-output

- Touches-per-ticket

These made sense when humans were doing 100% of the work.

When tasks were linear. When communication was slow.

When meetings were necessary because the data lived in people’s heads.

But now?

- Some of that work is being pre-processed by AI before anyone sees it.

- Decisions are being made faster—but not always better.

- "Touches" can include bots, assistants, agents, even API calls that don’t involve a person at all.

So what are we really measuring?

And more importantly—what are we incentivizing without realizing it?
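One way to make the "touches" question concrete is to stop counting touches as a single number and split them by who, or what, made them. The sketch below is illustrative only: the `Touch` record and the `"human"`, `"bot"`, and `"api"` labels are assumptions, not fields from any particular ticketing system.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Touch:
    ticket_id: str
    actor_type: str  # hypothetical labels: "human", "bot", "api"


def touches_by_actor(touches):
    """Count touches per ticket, split by the type of actor that made them."""
    per_ticket = {}
    for t in touches:
        per_ticket.setdefault(t.ticket_id, Counter())[t.actor_type] += 1
    return per_ticket


# Made-up sample data for illustration.
touches = [
    Touch("T-1", "bot"),
    Touch("T-1", "api"),
    Touch("T-1", "human"),
    Touch("T-2", "bot"),
    Touch("T-2", "bot"),
]

breakdown = touches_by_actor(touches)
for ticket_id, counts in breakdown.items():
    total = sum(counts.values())
    print(ticket_id, "total touches:", total, "human touches:", counts["human"])
```

A ticket with three touches and one human looks very different from a ticket with three human touches, even though the legacy metric reports the same number for both.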

Why legacy KPIs quietly kill momentum

You can’t track speed without checking if quality held.

You can’t celebrate lower ticket volume without confirming your AI didn’t just deflect problems, leaving the user stranded.

You can’t measure “productivity” by volume if the inputs are synthetic and the outputs don’t map to impact.
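To see how a deflection number can hide stranded users, here is a minimal sketch that reports deflections alongside how many of them were actually resolved. Everything in it is an assumption for illustration: the `Conversation` fields, and the rule that "resolved" means the user confirmed the fix and did not come back.

```python
from dataclasses import dataclass


@dataclass
class Conversation:
    handled_by_ai: bool       # the AI closed it without a ticket being opened
    user_confirmed_fix: bool  # hypothetical signal, e.g. a follow-up survey
    reopened: bool            # the user came back with the same problem


def deflection_report(conversations):
    """Pair the raw deflection count with a resolved rate and a stranded rate."""
    ai_closed = [c for c in conversations if c.handled_by_ai]
    if not ai_closed:
        return {"deflected": 0, "resolved_rate": None, "stranded_rate": None}
    resolved = sum(1 for c in ai_closed if c.user_confirmed_fix and not c.reopened)
    stranded = len(ai_closed) - resolved
    return {
        "deflected": len(ai_closed),
        "resolved_rate": resolved / len(ai_closed),
        "stranded_rate": stranded / len(ai_closed),
    }


# Made-up sample data for illustration.
conversations = [
    Conversation(handled_by_ai=True, user_confirmed_fix=True, reopened=False),
    Conversation(handled_by_ai=True, user_confirmed_fix=False, reopened=False),
    Conversation(handled_by_ai=True, user_confirmed_fix=True, reopened=True),
    Conversation(handled_by_ai=False, user_confirmed_fix=True, reopened=False),
]

report = deflection_report(conversations)
print(report)
```

In this sample, three conversations count as "deflected," but only one of them ended with a user whose problem was fixed. The ticket-volume chart would show the three.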

Here’s the risk:

The system gets smarter, faster, more autonomous—

but you’re still using lagging indicators built for slower, dumber environments.

That’s how companies look “efficient” on paper while quietly leaking value.

New systems demand new questions

AI-native work requires a shift in what you track, how often you track it, and who it's for.

Some categories to rethink:

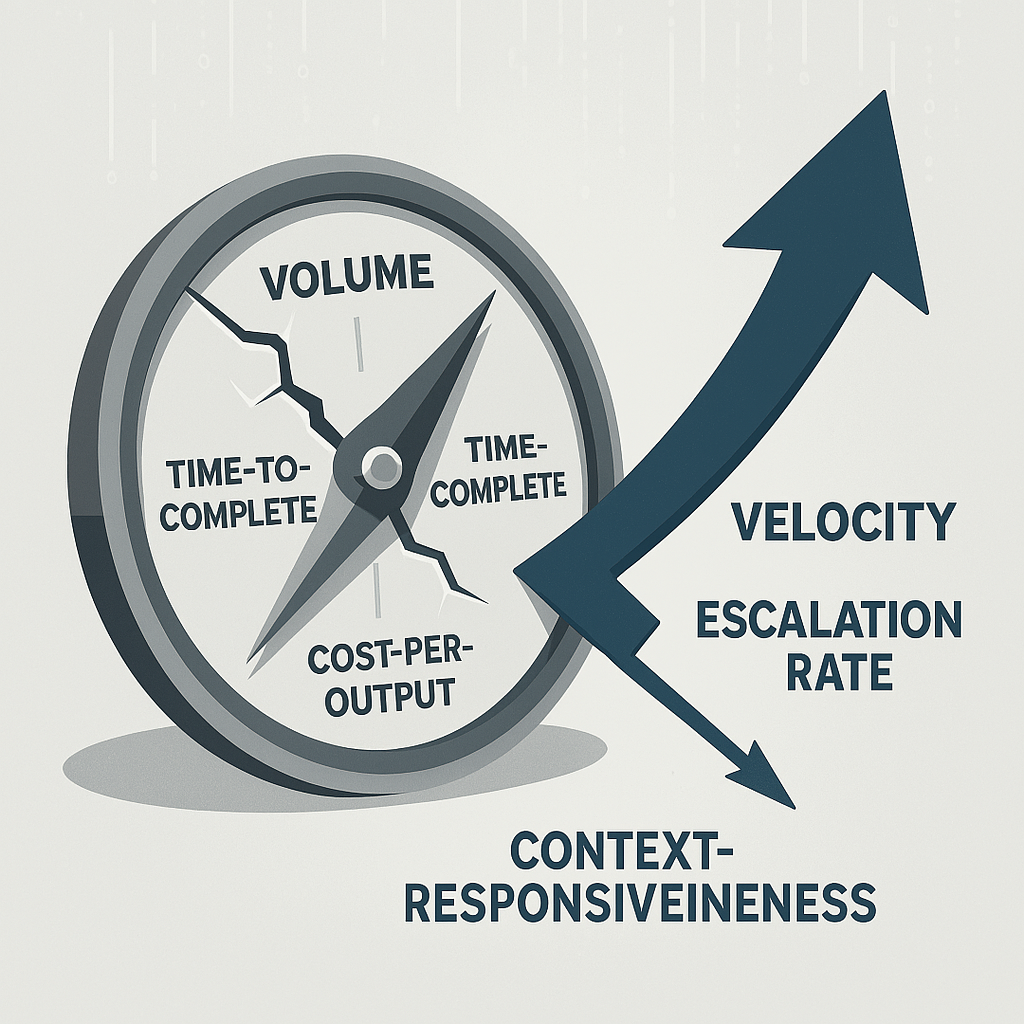

1. From volume to velocity-through-feedback

How fast are loops closing? Not just how many units moved.

2. From completion to escalation rate

Where does the AI need help? How often does a human need to step in—and why?

3. From task count to context-responsiveness

Is the system adapting in real time? Or just automating bad processes faster?

4. From cost-per-output to cost-per-outcome

AI lowers unit cost. But did it create value—or just more content, more steps, more noise?

5. From static dashboards to living telemetry

The idea of a weekly KPI review is already too slow.

You need real-time signal that feeds back into your workflow design.
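Two of these shifts, escalation rate and cost-per-outcome, are simple enough to sketch in a few lines. This is a rough illustration under stated assumptions: the `Task` record, its fields, and the escalation reason strings are hypothetical, and what counts as an "outcome" is something each team has to define for itself.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Task:
    cost: float
    achieved_outcome: bool                   # team-defined: did this create value?
    escalation_reason: Optional[str] = None  # None means no human stepped in


def escalation_summary(tasks):
    """Return the share of tasks a human had to step into, and why."""
    reasons = Counter(t.escalation_reason for t in tasks if t.escalation_reason)
    rate = sum(reasons.values()) / len(tasks) if tasks else 0.0
    return rate, reasons


def cost_per_output(tasks):
    """Legacy view: total cost divided by everything produced."""
    return sum(t.cost for t in tasks) / len(tasks) if tasks else 0.0


def cost_per_outcome(tasks):
    """Total cost divided only by the tasks that achieved an outcome."""
    outcomes = sum(1 for t in tasks if t.achieved_outcome)
    return sum(t.cost for t in tasks) / outcomes if outcomes else float("inf")


# Made-up sample data for illustration.
tasks = [
    Task(cost=0.5, achieved_outcome=True),
    Task(cost=0.5, achieved_outcome=False),
    Task(cost=2.0, achieved_outcome=True, escalation_reason="missing context"),
    Task(cost=1.0, achieved_outcome=False, escalation_reason="low confidence"),
]

rate, reasons = escalation_summary(tasks)
print("escalation rate:", rate)
print("escalation reasons:", dict(reasons))
print("cost per output:", cost_per_output(tasks))
print("cost per outcome:", cost_per_outcome(tasks))
```

With this sample, cost per output is 1.0 while cost per outcome is 2.0, because half the outputs created no value. The reason counts matter as much as the rate: they point at where the workflow needs redesigning.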

What to do about it

- Audit your KPIs: What were they designed to measure? Are those constraints still relevant?

- Reframe "success": Shift from quantity metrics to systems health metrics. How adaptive is the system? How often is a human needed?

- Instrument the edge cases: Where AI breaks down is where the real value is hiding. Measure exceptions, escalations, weird patterns.

- Build in qualitative checks: Not everything is a number. Some things need to be interpreted. That’s where your team’s judgment kicks in (see Issue #8).

This is the hard part of real transformation.

It’s not the tools—it’s the measurement layer.

You don’t change how people work unless you change what they think they’re being graded on.

And most businesses haven’t updated the grading rubric since before AI showed up.

If you're starting to rethink your operational metrics, or feel like your dashboards don’t match what’s actually happening, you're not alone.

This is where most companies stall.

Learn to fix it before the next layer gets built on sand.