The Work that Remains Part 2: From Execution to Interpretation

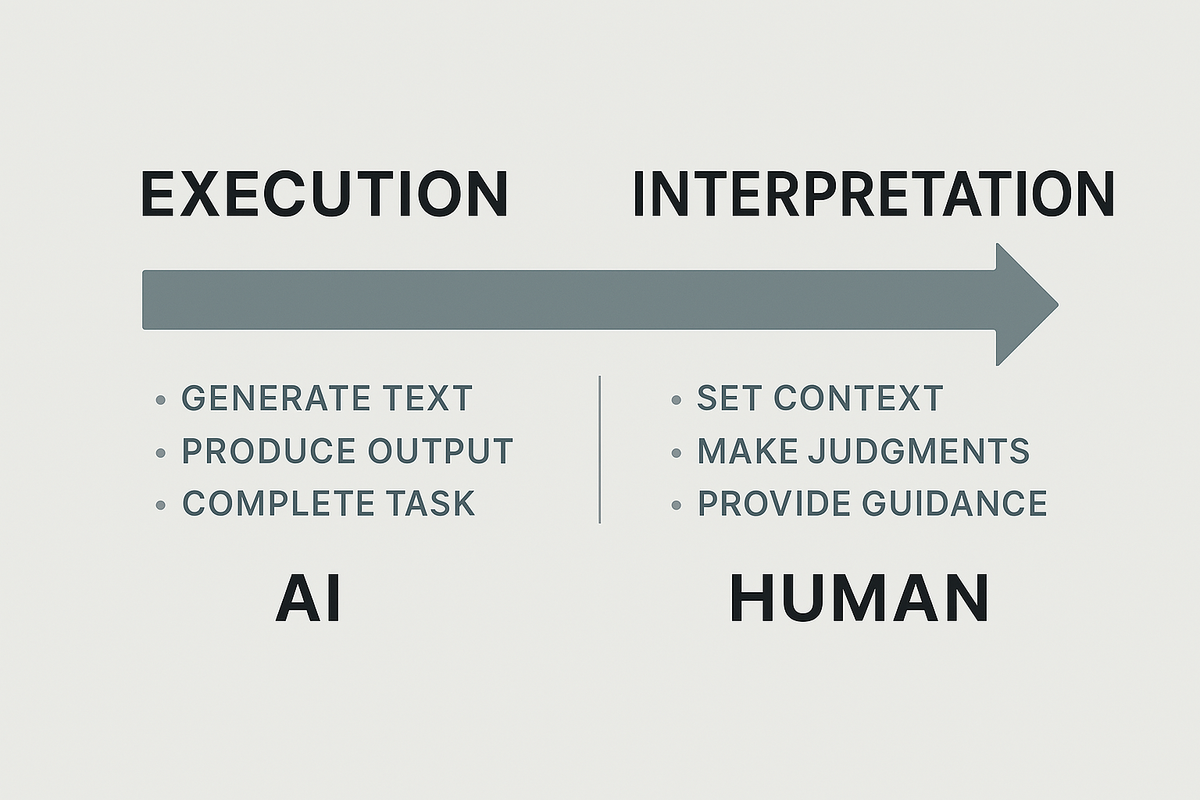

AI can execute. Humans need to interpret.

We used to define value by output.

How fast can you write it?

How well can you build it?

How many can you ship?

But now AI can do the output.

Now AI writes it faster. Builds it cleaner. Ships it without blinking. At scale.

The question has changed.

From: Can you do the thing?

To: Can you frame what matters and interpret what comes next?

Execution used to be the job

Execution was everything.

You were trusted because you delivered.

Quick turnaround. Precise edits. No mess.

But AI is changing the ratio:

Execution costs are dropping.

Interpretation costs are rising.

You don’t need more people who can write the thing.

You need people who know what the thing should be, when it matters, and how to make the tradeoff when two good options are in conflict.

What’s missing is interpretation.

Judgment.

Context.

Not because it's trendy—but because without it, nothing means anything.

Why interpretation is the real work now

AI can generate 100 responses.

Only a human knows which one aligns with strategy, tone, and timing.

AI can write a report.

Only a human can spot the contradiction, feel the risk, ask the question no one asked.

AI can suggest next steps.

Only a human can say: “We shouldn’t do any of these.”

Interpretation is no longer a bonus skill.

It’s the thing that makes the work useful.

The new value chain

What’s really changing

| What used to matter | What matters now |
|---|---|
| Getting the task done | Knowing which task is real |
| Explaining the decision | Spotting what’s not being said |
| Optimizing what you have | Reframing what’s possible |
| Finishing the sprint | Asking why we’re racing at all |

AI is fast.

But fast doesn’t mean right.

Interpretation isn’t about opinion—it’s about structure

If you want to increase your value in an AI-native team, learn to:

- Spot the real constraint

- Frame the tradeoff

- Ask the higher-order question

- Define the criteria for “right”

- Sense when the data is clean, but the answer is wrong

- Choose which rule to follow, and which one to bend

Execution is increasingly a system.

Interpretation is still a person.

How to work inside the fog

- Don’t measure by completion

Measure by meaning. Did this change how the team sees the problem?

- Get good at holding tension

Not everything needs to be resolved. Some questions need to breathe.

- Slow down when it’s most uncomfortable

Confusion feels inefficient. But it’s where the real work lives now.

- Say what others won’t

Interpretation starts when someone’s willing to say,

“This doesn’t make sense. And maybe that’s the point.”

The future won’t be led by the fastest hands.

It’ll be led by the sharpest interpreters.

AI can output.

It can assist.

It can adapt.

But it still can’t ask better questions than you.

So the question isn’t what AI can do next.

It’s what you will still be trusted to make sense of—

when everything else is already done.

What will you stand for

when speed, scale, and surface-level answers

are no longer scarce?

What will be your edge

when confusion is the new default

and clarity is the rarest skill in the room?

What do you see

that the machine never will?

Stay tuned for Part 3: The Return of the Generalist

Here is a snippet:

You don’t need more specialists.

You need someone who can walk into the fog, hold five variables in tension, and still make the call.

The generalist isn’t back because the world got simpler.

They’re back because it didn’t.

When everyone has tools, the edge belongs to those who can connect dots across systems, timeframes, and disciplines—and still act with taste.

AI is optimizing every function.

But who’s asking whether the functions still matter?

More soon,

Gage Batten

Under Construction

How work is being rebuilt in real time